I recently had some thoughts about an aspect of Friston's free energy principle, specifically about what happens when multiple agents are performing active inference to predict future states of their environment and start modeling the behavior of other agents.

The free energy principle, in short, suggests that biological agents act to minimize the difference between their predictions and actual sensory input, essentially reducing uncertainty about the world through perception and action. Active inference is the mechanism through which agents do this, by continuously updating internal models and acting on the world to bring it in line with those predictions.
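For readers who want the quantity behind this description, the variational free energy is usually written as below. The symbols are my own notation, not anything fixed by the text above: $o$ stands for sensory observations, $s$ for hidden states of the world, $p$ for the agent's generative model, and $q$ for its approximate posterior belief.

$$
F[q] = \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big] = D_{\mathrm{KL}}\big[q(s)\,\|\,p(s \mid o)\big] - \ln p(o)
$$

Because the KL term is non-negative, $F$ is an upper bound on the surprise $-\ln p(o)$. Perception lowers $F$ by improving $q$; action lowers it by changing which $o$ gets sampled.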

When there are multiple agents that perform this task well and any new genetic advances are disseminated quickly (i.e., there's strong selection for modeling other agents), predicting future behavior becomes useless for adversarial inter-agent competition, because any prediction made by one agent can be anticipated by the other.

In simple words, if I know what my opponent is going to do, then my opponent also knows what I think they will do, so they can just do the opposite, and any predictability vanishes.

But it doesn’t stop there. If I know that they know what I think they’ll do, then they know that too, and so on.

This recursive loop leads to an unstable modeling stack, where the higher the level of mutual inference, the less actionable information there is to exploit.

The agents essentially neutralize each other's predictive edge, leading to a kind of behavioral noise or stalemate where strategic advantage evaporates.
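The regress can be made concrete with a toy game. The sketch below uses matching pennies and level-k reasoning, both of which are my choice of illustration and not part of the free energy formalism: a level-k player assumes the opponent reasons at level k-1 and best-responds to that. The names `level_k_action`, `HEADS`, `TAILS`, and the naive level-0 default are all hypothetical.

```python
# Matching pennies: the "matcher" wins if both sides show the same face,
# the "mismatcher" wins if the faces differ.
HEADS, TAILS = "H", "T"


def flip(side):
    """Return the opposite face."""
    return TAILS if side == HEADS else HEADS


def level_k_action(role, k, level0=HEADS):
    """Action of a level-k player who assumes the opponent reasons at level k-1.

    level0 is the naive default move of a player who does no modeling at all
    (an assumption made only to anchor the recursion).
    """
    if k == 0:
        return level0
    opponent_role = "mismatcher" if role == "matcher" else "matcher"
    predicted = level_k_action(opponent_role, k - 1, level0)
    # The matcher copies the predicted move, the mismatcher avoids it.
    return predicted if role == "matcher" else flip(predicted)


# Recommended move for each role as the depth of mutual modeling grows.
matcher_plan = [level_k_action("matcher", k) for k in range(9)]
mismatcher_plan = [level_k_action("mismatcher", k) for k in range(9)]

print("depth      :", " ".join(str(k) for k in range(9)))
print("matcher    :", " ".join(matcher_plan))
print("mismatcher :", " ".join(mismatcher_plan))
```

The matcher's recommended move runs H, H, T, T, H, H, T, T, H as the depth goes from 0 to 8: it cycles and never settles. Whoever reasons exactly one level deeper than the other wins, so only relative depth matters. The one fixed point of the regress is to randomize 50/50, the mixed-strategy equilibrium of matching pennies, which is the behavioral noise described above.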

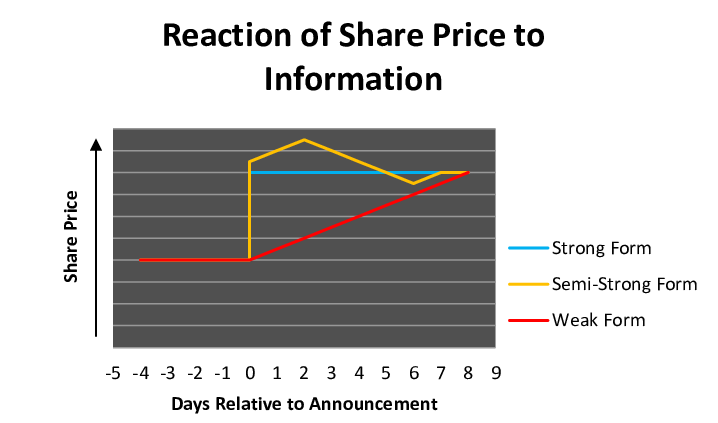

This situation mirrors an efficient market, where any widespread knowledge about future prices is "priced in" immediately and thus removes any future predictability.

The Efficient Market Hypothesis, in essence, says that if information is widely known and quickly disseminated, then any opportunity to profit from it disappears almost instantly. In an efficient market, the collective modeling power of all participants ensures that prices already reflect all available knowledge. So if you think you've spotted a pattern or a signal about what's going to happen next, chances are that everyone else has too, and it's already been priced in. There’s no edge left to exploit. Any predictability is arbitraged away the moment it emerges.

Over evolutionary time, the ability to model the intentions and likely actions of adversaries offers a clear advantage. If an agent can anticipate and preempt the moves of others, whether in hunting, defense, or competition for resources, it gains a fitness edge. This drives an evolutionary arms race: as one agent improves its modeling capabilities, others must follow suit or be outmaneuvered. Each upgrade in inference power by one party becomes the new baseline the others must adapt to. Over time, the competitive space becomes saturated with highly capable modelers, and just like in an efficient market, the informational advantage begins to vanish.

This train of thought also leads to a couple of interesting hypotheses:

-

In an adversarial encounter, the quality of one’s inference in absolute terms is not advantageous, only the delta compared to one's opponent.

-

Collaboration between agents on the other hand benefits from an agent’s ability to infer future states of the environment or behavior of other actors in absolute terms.

-

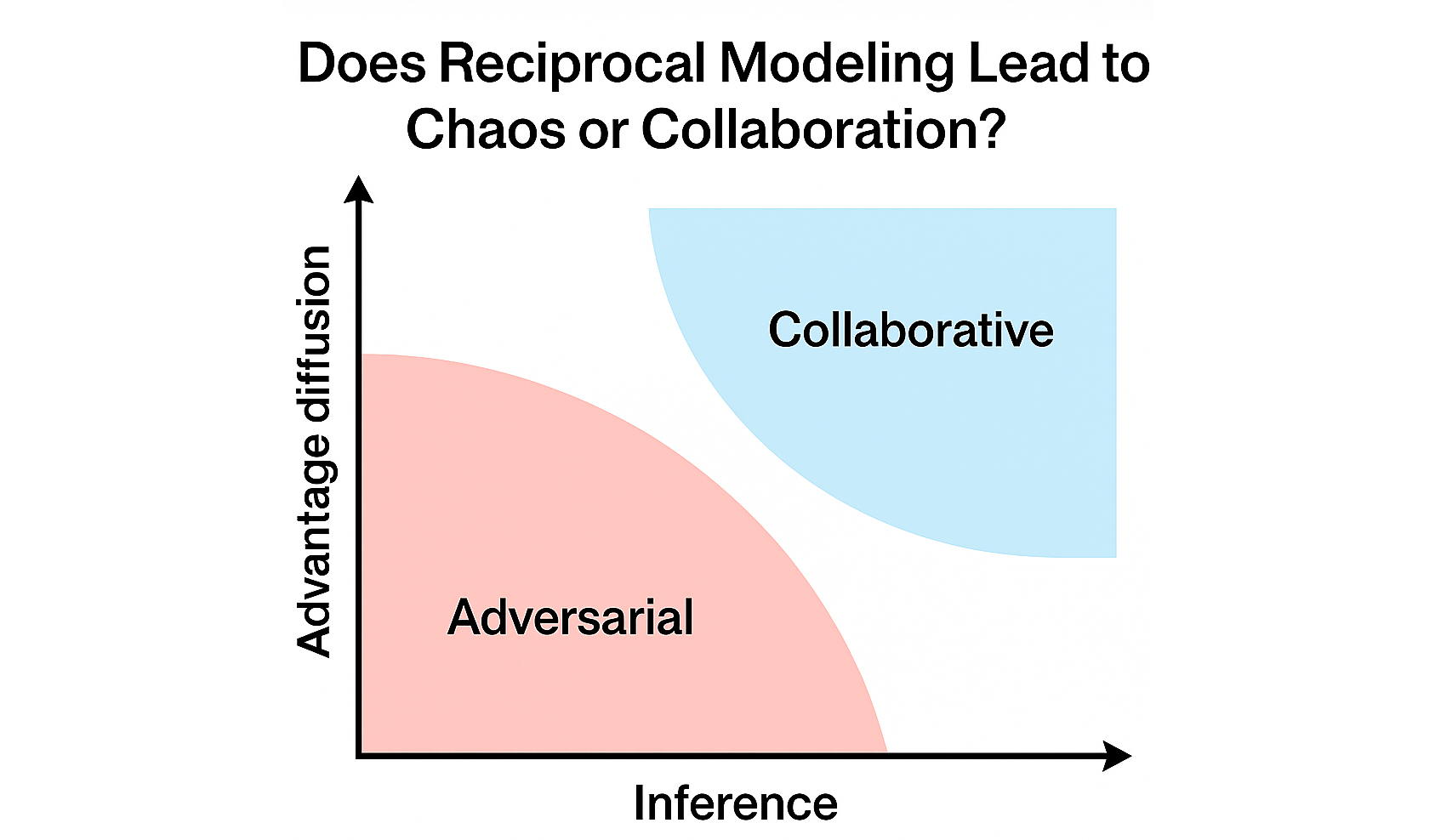

In a situation where an agent is faced with the policy choice collaborate vs. antagonize, there could be a phase change depending on inference ability and rate of information/gene spread.

When inter-agent inference is poor and improved modeling genes or abilities spread slowly, an adversarial attitude is preferable; when inference is good and improvements spread quickly, collaboration is. At a certain threshold, when everyone can predict everyone else to a high degree and information spreads rapidly, further improvements yield diminishing returns in adversarial settings. That’s the tipping point. In such conditions, collaboration becomes not just more stable, but more evolutionarily advantageous. Mutual modeling shifts from being a weapon to being a bridge. Instead of using predictive power to outsmart, agents can use it to synchronize, coordinate, and reduce conflict, thus forming the basis for trust and shared goals.
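To see how such a tipping point can fall out of the two hypotheses, here is a deliberately crude toy model. Everything in it is an assumption made for illustration: skills live in [0, 1], `spread` is the fraction of my modeling ability that has already reached the other agent, the adversarial payoff is simply my lead over the opponent, and the collaborative payoff is the product of both skills (coordination works only if both sides predict each other well). None of these functional forms comes from the free energy literature.

```python
def adversarial_payoff(own_skill, opponent_skill):
    """Zero-sum contest: only the lead over the opponent pays (assumed form)."""
    return own_skill - opponent_skill


def collaborative_payoff(own_skill, partner_skill):
    """Joint task: pays only if both sides model each other well (assumed form)."""
    return own_skill * partner_skill


def preferred_policy(skill, spread):
    """Pick the better policy for an agent with the given inference skill.

    spread in [0, 1] is the fraction of this agent's modeling ability that
    has already diffused to the other agent (hypothetical parameter).
    """
    other_skill = spread * skill
    adversarial = adversarial_payoff(skill, other_skill)
    collaborative = collaborative_payoff(skill, other_skill)
    return "collaborate" if collaborative > adversarial else "antagonize"


levels = [0.1, 0.3, 0.5, 0.7, 0.9]
print("rows: skill, columns: spread =", levels)
for skill in levels:
    # One letter per cell: A = antagonize, C = collaborate.
    row = [preferred_policy(skill, spread)[0].upper() for spread in levels]
    print(skill, " ".join(row))
```

In this toy, collaboration wins once `skill > (1 - spread) / spread`, which is only possible when `spread` exceeds 0.5. The exact boundary is an artifact of the assumed payoffs; the point is only that a payoff driven by the delta and a payoff driven by absolute ability must cross somewhere as skill and spread increase.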

This idea could be extended to a continuous model where these parameters can vary over space and time: in a reaction-diffusion system one could see self-organization into collaborating portions with adversarial borders in between. Additional benefits from collaboration that scale non-linearly with group size, such as improved survival or a lower risk from adversarial encounters or predation, could also be included here.
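As a rough picture of what that could look like, the sketch below integrates a one-dimensional bistable reaction-diffusion equation, du/dt = D * d²u/dx² + u - u³, on a ring of sites. This particular equation (an Allen-Cahn-type model) is a stand-in I picked because it is the simplest one that forms domains; it is not derived from the argument above. The interpretation is also an assumption: the sign of `u` labels which bloc a site aligns with, `|u|` near 1 means the site is well coordinated with its neighbors, and the narrow fronts where `u` crosses zero play the role of adversarial borders. All names and parameter values are hypothetical.

```python
import math


def simulate(n_sites=120, n_waves=3, diffusion=1.0, dt=0.1, steps=300):
    """Explicit-Euler integration of du/dt = D * u_xx + u - u**3 on a ring."""
    # Small sinusoidal perturbation as a reproducible initial condition.
    u = [0.1 * math.sin(2 * math.pi * n_waves * (i + 0.5) / n_sites)
         for i in range(n_sites)]
    for _ in range(steps):
        new_u = []
        for i in range(n_sites):
            left = u[i - 1]                 # index -1 wraps around the ring
            right = u[(i + 1) % n_sites]
            laplacian = left - 2 * u[i] + right   # lattice spacing of 1
            reaction = u[i] - u[i] ** 3           # bistable: pushes u to +1 or -1
            new_u.append(u[i] + dt * (diffusion * laplacian + reaction))
        u = new_u
    return u


def count_borders(u):
    """Number of fronts, i.e. neighboring pairs on the ring with opposite sign."""
    return sum(1 for i in range(len(u)) if u[i] * u[i - 1] < 0)


field = simulate()
aligned = sum(1 for x in field if abs(x) > 0.9) / len(field)
print("borders:", count_borders(field))
print("fraction of well-aligned sites:", round(aligned, 2))
# Crude text rendering: '+' and '-' are the two blocs, '.' marks a border zone.
print("".join("+" if x > 0.9 else "-" if x < -0.9 else "." for x in field))
```

Starting from a weak perturbation, the field settles into saturated blocs separated by thin fronts. Making `diffusion` or the reaction term depend on position, or adding a group-size bonus, would be the natural next step toward the fuller model sketched in the paragraph above.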

One fascinating implication of this dynamic is that it might help explain the emergence of multicellular life. Single-celled organisms began as independent agents, potentially in competition. But once their ability to model chemical gradients, environmental signals, and each other reached a certain complexity, cooperation offered a better payoff than continued arms races. Cells that could infer the behavior of their neighbors and align their activity accordingly were more likely to survive and reproduce. Eventually, cooperation hardened into structure, leading to the first self-organizing, collaborative collectives: multicellular organisms.

As these multicellular agents start modeling each other, the same process repeats. Individuals that can anticipate the actions of predators and prey gain an advantage, and they also benefit from modeling kin. But just like before, as modeling capabilities become more refined and more common, the predictive advantage begins to erode.

At this point, the same evolutionary dynamic kicks in: when adversarial modeling becomes saturated and costly, cooperation becomes the more stable and scalable strategy. Organisms start to group, not just out of necessity, but because coordinated behavior is easier when everyone can model everyone else with sufficient fidelity. The herd becomes an information-sharing network. The tribe, a shared predictive system. Bands of humans hunting together weren’t just a tactical alliance; they were distributed cognitive systems that could infer, plan, and adapt collectively.

Eventually, these groups become the substrate for culture, language, and norms, which serve as tools for synchronizing models across individuals. In humans, this shared modeling crystallized into stories, rituals, and rules, and the dynamics of reciprocal modeling scale up again, from tribe to civilization.