This post is a continuation of Entropic Gravity Part 1: Diffusion in non-uniform time and space

Classical Diffusion in Inhomogeneous Time

One of the consequences of GR is gravitational time dilation (for a refresher watch Interstellar, it's a good movie), i.e. time runs slower near massive objects. Outside of a spherical mass $M$, the local rate of the flow of time goes like $d\tau/dt = \sqrt{1 - 2GM/(rc^2)}$, so it depends on the radial distance $r$. This is what I'm going to build towards, but first we should build some intuition on some simple examples.

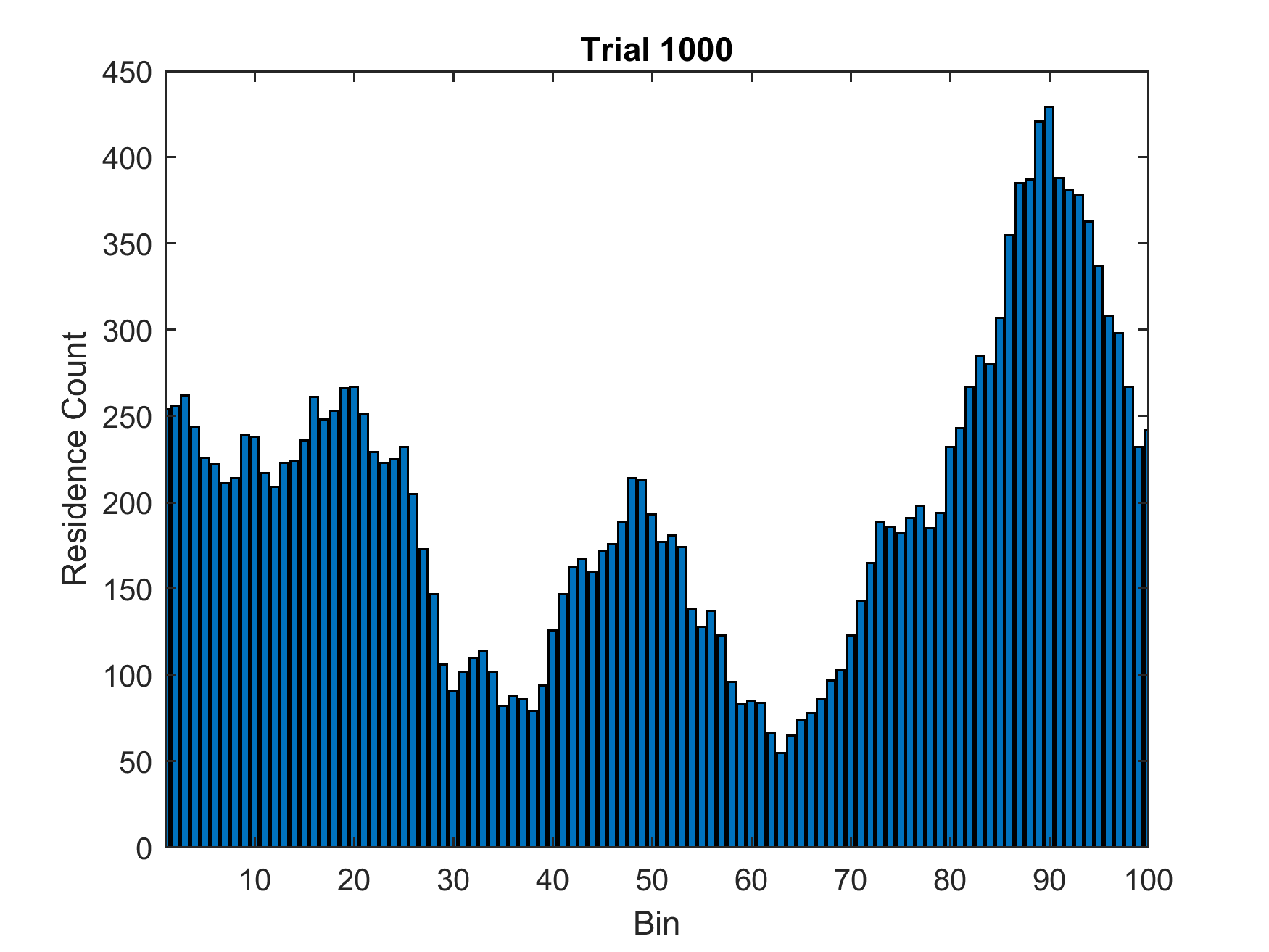

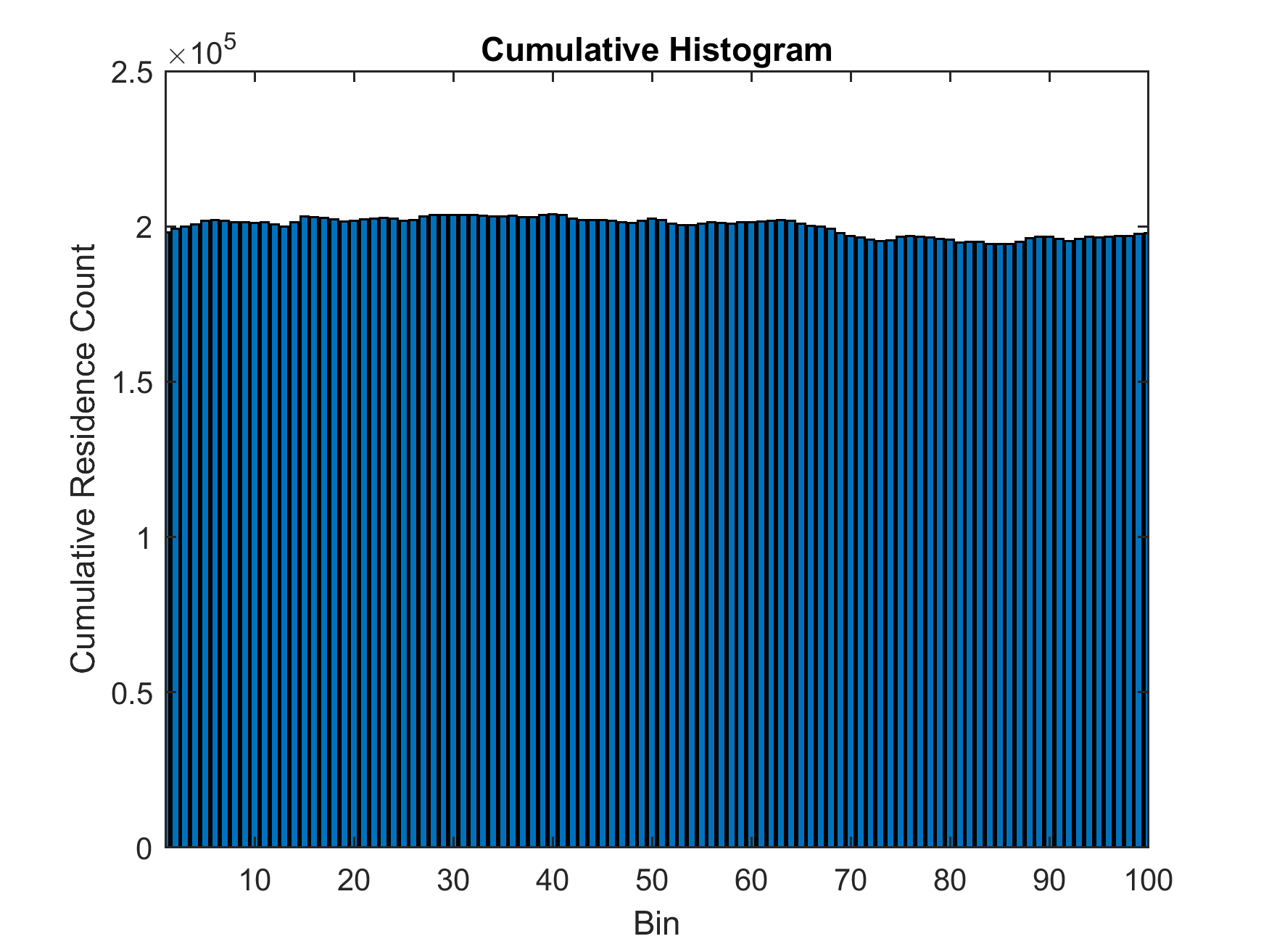

First, let's explore what effect different rates of flow of time have on a particle diffusing on a 1D ring. As a baseline, here's a simple Monte-Carlo simulation in MATLAB using an unbiased random walk with homogeneous time flow (20000 time steps per trial, 1000 trials). Plotting the histogram of where the particle is located at each step shows the expected uniform distribution.

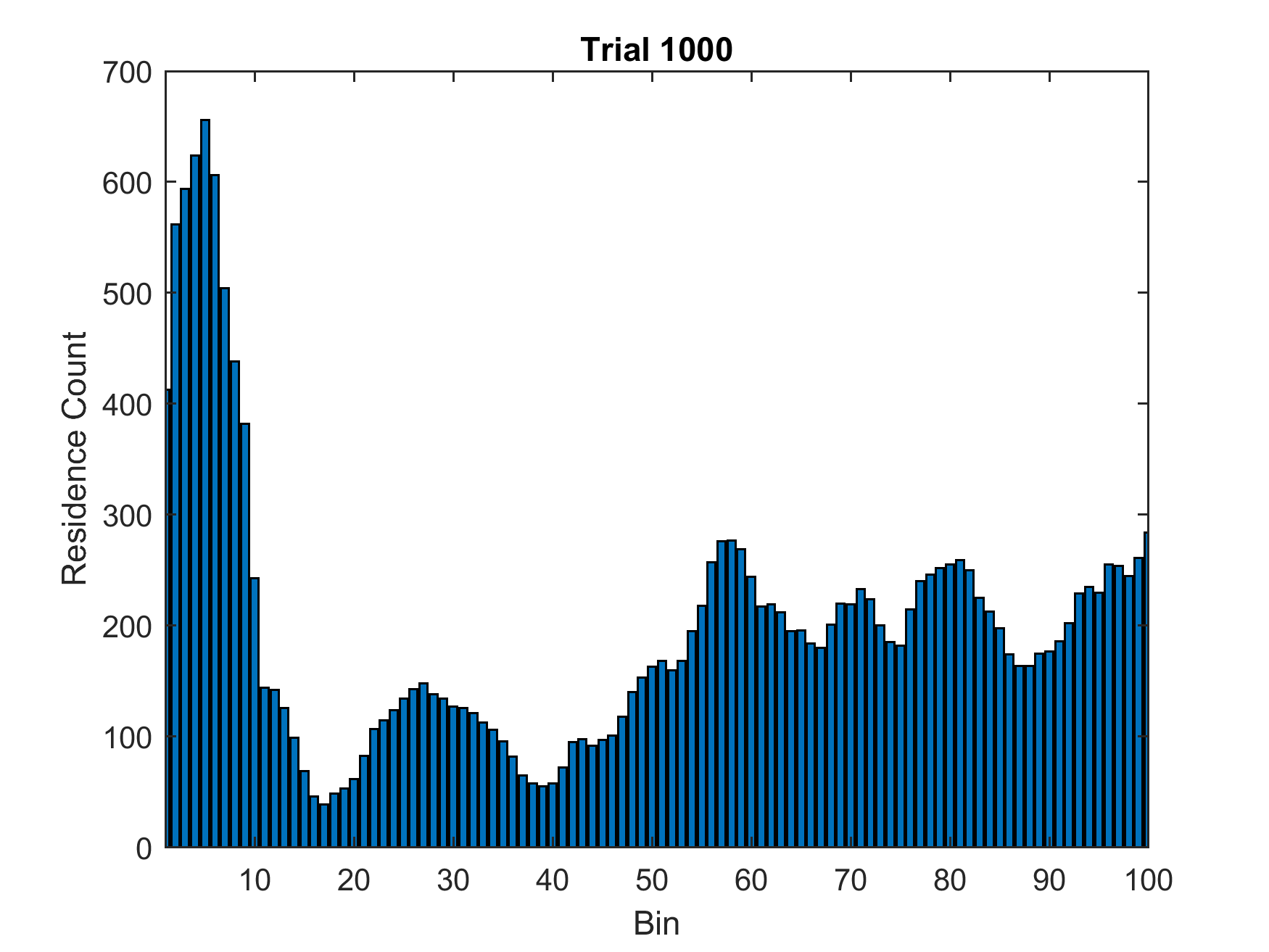

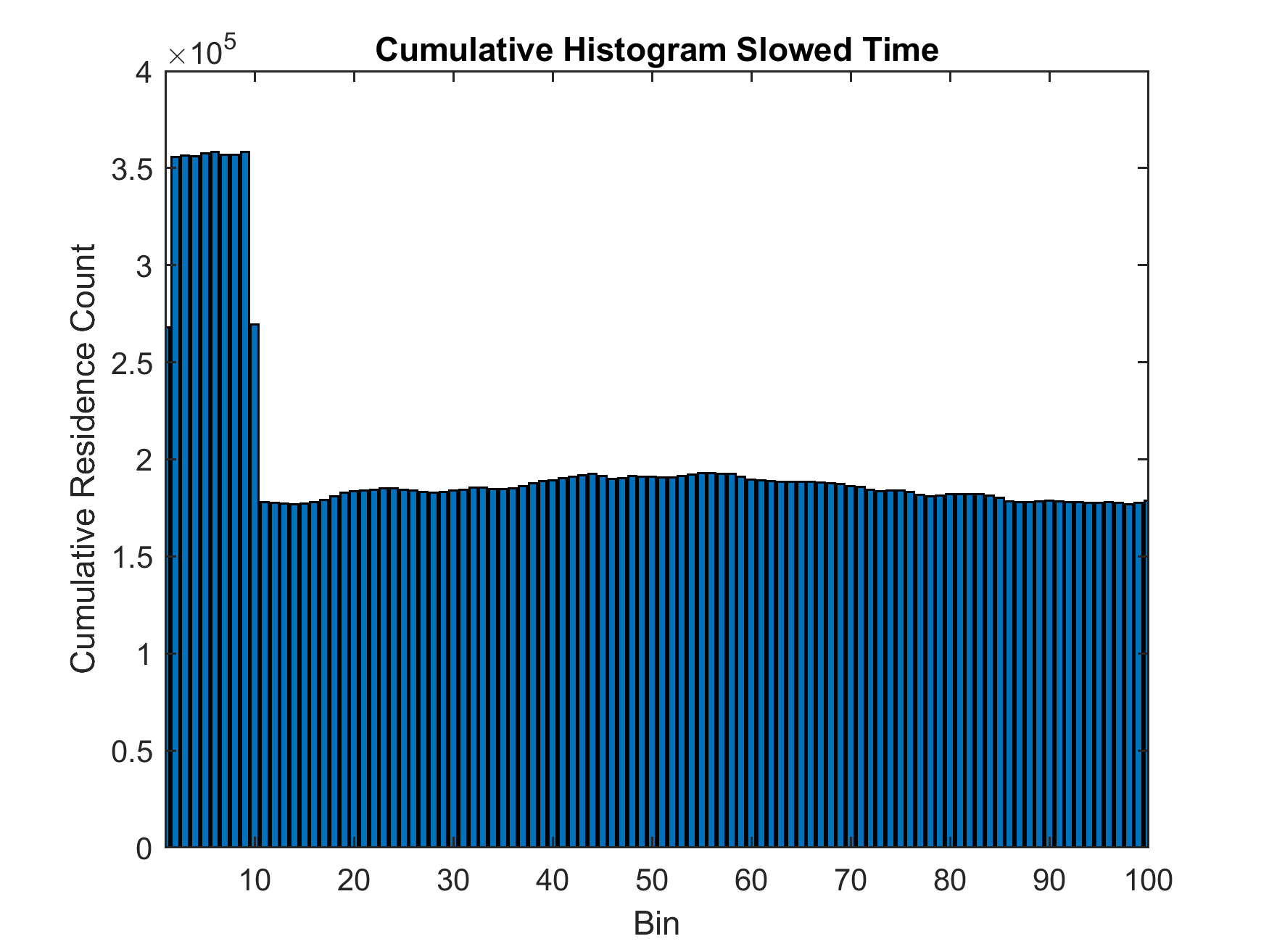

So what effect do variations in the flow of time have on diffusing particles? To make the flow of time inhomogeneous, I picked the first 10 bins on the ring and made the random walk in that region only update once for every two updates on the rest of the ring. Running the same Monte-Carlo simulation now yields a different result: The particle on average spends twice as much time in the region with slowed time.
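A minimal Python sketch of this experiment (not the original MATLAB code) could look like the following. The ring size, the step count, the function names, and the choice to skip updates at random with probability 1/2 instead of on a fixed every-other-tick schedule are all assumptions made for illustration.

```python
import random


def simulate_ring(n_bins=20, slow_bins=5, slow_factor=2, n_steps=400_000, seed=0):
    """Unbiased random walk on a ring whose first `slow_bins` bins have slowed time.

    Returns how many coordinate-time ticks the walker spent in each bin.
    """
    rng = random.Random(seed)
    counts = [0] * n_bins
    pos = n_bins // 2
    for _ in range(n_steps):
        in_slow_region = pos < slow_bins
        # Assumption: inside the slow region the walker's clock ticks only with
        # probability 1/slow_factor per global tick (a randomized stand-in for
        # "one update for every slow_factor updates elsewhere").
        if not in_slow_region or rng.random() < 1.0 / slow_factor:
            pos = (pos + rng.choice((-1, 1))) % n_bins
        counts[pos] += 1
    return counts


def occupancy_ratio(counts, slow_bins):
    """Mean visits per slow bin divided by mean visits per normal bin."""
    slow = sum(counts[:slow_bins]) / slow_bins
    fast = sum(counts[slow_bins:]) / (len(counts) - slow_bins)
    return slow / fast


if __name__ == "__main__":
    counts = simulate_ring()
    print("slow/normal occupancy per bin:", occupancy_ratio(counts, slow_bins=5))
```

With a slow-down factor of 2, the per-bin occupancy ratio should come out close to 2, up to sampling noise.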

This simulation can be run with many different amounts of time dilation (or time-dilation factors), but it always yields the same result:

The probability of finding a particle in a bin with slowed time relative to a bin outside that region is directly proportional to the amount of time dilation.

Let's try to generalize this point. We can add the effect of time dilation to the Fokker-Planck equation for diffusion either by using the chain rule on coordinate time (local flow of time) and using proper time instead, or by incorporating the slower rate of passage of time into the diffusion constant. I will use the latter approach.

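We start from the usual one-dimensional Fokker–Planck equation written in proper time $\tau$ (the particle’s own clock, wherever it is):

$$\frac{\partial p}{\partial \tau} = D_0 \frac{\partial^2 p}{\partial x^2}$$

The outside world measures coordinate time $t$. The two clocks are linked by the time-dilation factor $\gamma(x)$:

$$dt = \gamma(x)\, d\tau$$

Or alternatively:

$$\frac{d\tau}{dt} = \frac{1}{\gamma(x)}$$

Because $\tau$ advances more slowly where $\gamma$ is large, the effective diffusion coefficient seen in coordinate time is:

$$D(x) = \frac{D_0}{\gamma(x)}$$

Replacing $D_0$ with $D(x)$ gives the Fokker–Planck equation in coordinate time:

$$\frac{\partial p}{\partial t} = \frac{\partial^2}{\partial x^2}\left[D(x)\, p(x,t)\right]$$

In equilibrium, $\partial p/\partial t = 0$, so the equation becomes:

$$\frac{d^2}{dx^2}\left[D(x)\, p(x)\right] = 0$$

Integrating twice (and assuming reflecting boundaries so the net probability flux is zero) gives:

$$p(x) = \frac{C}{D(x)}$$

Substituting $D(x) = D_0/\gamma(x)$ gives the final result:

$$p(x) = \frac{C}{D_0}\,\gamma(x)$$

Or in other words:

$$p(x) \propto \gamma(x)$$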

This is a general statement of what the earlier MATLAB simulations showed, namely that regions with slowed time lead to a higher probability of finding a particle inside.
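As a deterministic cross-check, here is a hedged sketch that relaxes a lattice version of $\partial_t p = \partial_x^2 [D(x)\,p]$ on a ring with $D = 1/\gamma$ and compares the result with $\gamma$ normalized to one. The cosine-shaped dilation profile, the unit lattice spacing, $D_0 = 1$, and the explicit Euler scheme are illustrative assumptions, not part of the original simulations.

```python
import math


def stationary_density(gamma, dt=0.25, n_steps=20_000):
    """Relax dp/dt = d^2/dx^2 [D(x) p] on a ring with D = 1/gamma (D_0 = 1).

    Uses explicit Euler steps on a unit-spaced lattice, starting from a
    uniform density; `dt` must stay below 0.5 for stability when D <= 1.
    """
    n = len(gamma)
    D = [1.0 / g for g in gamma]
    p = [1.0 / n] * n
    for _ in range(n_steps):
        q = [D[i] * p[i] for i in range(n)]  # the product D(x) p(x)
        # Discrete second derivative of q; index -1 wraps around the ring.
        p = [p[i] + dt * (q[i - 1] - 2.0 * q[i] + q[(i + 1) % n]) for i in range(n)]
    return p


def dilation_profile(n=30):
    """Hypothetical smooth time-dilation profile with gamma between 1 and 2."""
    return [1.5 + 0.5 * math.cos(2.0 * math.pi * i / n) for i in range(n)]


if __name__ == "__main__":
    gamma = dilation_profile()
    p = stationary_density(gamma)
    total = sum(gamma)
    print(max(abs(p[i] - gamma[i] / total) for i in range(len(gamma))))
```

The printed number is the largest deviation between the relaxed density and the normalized $\gamma$ profile, which should be at the level of floating-point round-off.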

From a classical thermodynamical perspective, we could interpret this result as stemming from a potential $U(x)$ that attracts the particle to this region. Adding this potential to a Fokker-Planck equation (commonly called the Smoluchowski equation in this form) with homogeneous flow of time gives us:

$$\frac{dp}{dx} = -\frac{p(x)}{k_B T}\,\frac{dU}{dx}$$

for the stationary distribution.

The potential that fulfills this condition can be found by integrating both sides:

$$\ln p(x) = -\frac{U(x)}{k_B T} + \text{const}$$

Exponentiating and plugging in the partition function $Z$ for the constant of integration leads to the standard form of the Boltzmann distribution in this choice of coordinates:

$$p(x) = \frac{1}{Z}\, e^{-U(x)/k_B T}$$

Comparing with $p(x) \propto \gamma(x)$, we get an expression for the apparent entropic potential energy as a function of the time-dilation factor $\gamma$ (up to an additive constant):

$$U(x) = -k_B T \ln \gamma(x)$$

So what looks like diffusion or a random walk in one set of coordinates (using local proper time everywhere) is consistent with an entropic force and associated potential in another set of coordinates (using the same coordinate time everywhere).
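The correspondence can be checked numerically with a small sketch. It assumes the entropic potential has the form $U = -k_B T \ln \gamma$ (additive constant set to zero), where $\gamma$ is the time-dilation factor, and uses hypothetical helper names. Feeding that potential into a Boltzmann distribution should give back a density proportional to $\gamma$.

```python
import math


def entropic_potential(gamma, kT=1.0):
    """U = -kT ln(gamma), with the additive constant set to zero."""
    return [-kT * math.log(g) for g in gamma]


def boltzmann_weights(U, kT=1.0):
    """Normalized Boltzmann distribution exp(-U/kT) / Z."""
    w = [math.exp(-u / kT) for u in U]
    Z = sum(w)
    return [x / Z for x in w]


if __name__ == "__main__":
    # Ring from the earlier example: 10 bins with time slowed by 2, 40 normal bins.
    gamma = [2.0] * 10 + [1.0] * 40
    p = boltzmann_weights(entropic_potential(gamma))
    print("slow/normal probability ratio:", p[0] / p[-1])
```

For the two-fold slowed region this corresponds to a potential well of depth $k_B T \ln 2$.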

Classical Diffusion in Inhomogeneous Space

When it’s space, rather than time, that is stretched or compressed, the intuition is even simpler than in the time-dilation case. For a unit of distance in stretched space $dx$, the stretch factor $s(x)$ tells you how the coordinate length $dx$ really corresponds to $d\ell = s(x)\,dx$ in proper distance. The stationary probability density in the coordinate $x$ is then weighted at each point by its local $s(x)$:

$$p(x) \propto s(x)$$

So a region where space is stretched ($s > 1$) collects proportionally more probability mass, while a compressed region ($s < 1$) gets proportionally less.
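A quick way to see this numerically is the sketch below. It assumes the equilibrium density is uniform in proper distance, draws positions that way, and then bins them by coordinate. The stretch values and the function name are hypothetical.

```python
import bisect
import itertools
import random


def coordinate_histogram(stretch, n_samples=100_000, seed=0):
    """Sample positions uniformly in proper distance and bin them by coordinate.

    `stretch[i]` is the proper length of the i-th unit-width coordinate bin.
    Returns the fraction of samples landing in each coordinate bin.
    """
    rng = random.Random(seed)
    edges = list(itertools.accumulate(stretch))  # proper-distance right edges
    counts = [0] * len(stretch)
    for _ in range(n_samples):
        s = rng.uniform(0.0, edges[-1])
        # Clamp in case s lands exactly on the final edge.
        counts[min(bisect.bisect_right(edges, s), len(stretch) - 1)] += 1
    return [c / n_samples for c in counts]


if __name__ == "__main__":
    stretch = [1.0, 2.0, 1.0, 0.5]
    print(coordinate_histogram(stretch))
    print([s / sum(stretch) for s in stretch])
```

The two printed lists (sampled fractions and normalized stretch factors) should agree up to sampling noise.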

Diffusion under a Schwarzschild Metric (unfinished)

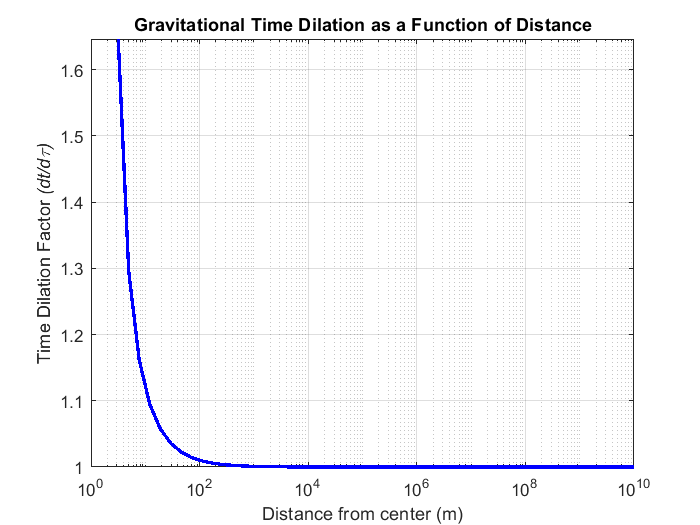

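Given that the probability increase of finding a particle in some region (p_observed) is proportional to the time-dilation factor $\gamma$, we can write for the arising entropic potential $U = -k_B T \ln \gamma$, and using gravitational time dilation $\gamma(r) = \left(1 - \frac{2GM}{rc^2}\right)^{-1/2}$:

$$U(r) = \frac{k_B T}{2}\,\ln\left(1 - \frac{2GM}{rc^2}\right)$$

The first couple terms of the Taylor expansion of this formula for $r \gg 2GM/c^2$ give us:

$$U(r) \approx -k_B T\left[\frac{GM}{rc^2} + \frac{G^2M^2}{r^2c^4}\right]$$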
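To get a feel for how quickly the leading $1/r$ term takes over, here is a small sketch. It assumes the entropic potential takes the form $U(r) = \tfrac{k_B T}{2}\ln(1 - r_s/r)$ with $r_s = 2GM/c^2$, which is what $U = -k_B T \ln\gamma$ gives for the Schwarzschild time-dilation factor. The function names and the units ($r_s = 1$, $k_B T = 1$) are illustrative choices.

```python
import math


def schwarzschild_entropic_potential(r, r_s=1.0, kT=1.0):
    """U(r) = (kT/2) ln(1 - r_s/r), i.e. -kT ln(gamma) for r > r_s."""
    return 0.5 * kT * math.log(1.0 - r_s / r)


def newtonian_limit(r, r_s=1.0, kT=1.0):
    """First-order term of the expansion in r_s/r: U ~ -kT r_s / (2 r)."""
    return -0.5 * kT * r_s / r


def relative_error(r, r_s=1.0):
    """Relative deviation of the 1/r term from the full logarithmic potential."""
    exact = schwarzschild_entropic_potential(r, r_s)
    return abs(newtonian_limit(r, r_s) - exact) / abs(exact)


if __name__ == "__main__":
    for r in (2.0, 10.0, 100.0):
        print(f"r = {r:6.1f} r_s  relative error of 1/r term: {relative_error(r):.4f}")
```

Far from the mass the $1/r$ term alone is an excellent approximation, while close to $r_s$ the logarithm deviates noticeably.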

Using the time-dilation factor from before we can place a massive object inside of the ring of our 1D-diffusing particle (off-center to introduce inhomogeneous rates of time). Running the Monte-Carlo simulation again yields a result that is in agreement with the previous simulation: when the particle is closer to the mass, it experiences more time dilation, leading to a higher apparent entropy than when it is further away.

[image of slowed-time distribution with offset mass.]

To quantify the increased probability of finding the particle there, we can plot the time_dilation_factor (proportional to probability) vs. distance. For reference, I've included the Newtonian gravitational potential for the same system given by gravitational_potential = -1e-4 ./ (distances).

It is important to point out that the Einsteinian gravitational potential [Formula] matches the formula for the distance-dependent probability [formula], and the Newtonian case can be derived from either by taking the first-order Maclaurin series: [Formula].

The distance-dependent apparent entropy goes as [Formula] and calculating the entropic force due to the entropy gradient gives [Formula], or [Formula] by taking a Maclaurin series, matching Newtonian gravity. Simulating a 3D random walk around the massive object shows the particle is more likely to be found near the mass, and integrating the probability by distance yields a distribution that matches the classical gravitational potential. While this is an interesting result, gravity acts on individual particles that don't diffuse through Brownian motion or collisions with nearby particles.

Additional Comments:

Notes on Temperature in GR

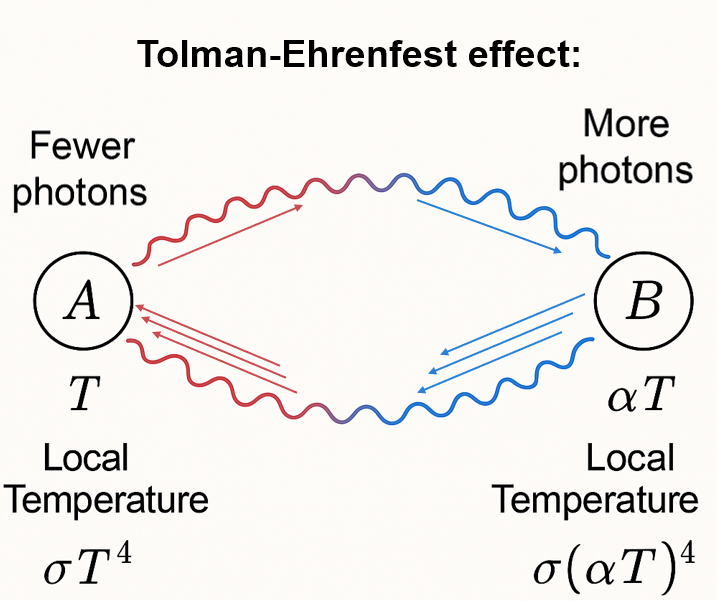

While writing this post I learned something very interesting about temperature in GR: temperature actually becomes observer-dependent due to differences in the local flow of time. Let's look at two observers, $A$ and $B$. $A$ sits far away from a massive object, where proper time aligns with coordinate time ($d\tau_A = dt$). $B$ is located much closer to the object with $d\tau_B = dt/\gamma$, $\gamma > 1$. At thermal equilibrium the flux of blackbody radiation traveling from $A$ to $B$ will exactly balance the flux from $B$ to $A$.

However, photon energies going from $B$ to $A$ are redshifted by a factor $1/\gamma$ and their emission rates (per $A$'s coordinate time) appear decreased by $1/\gamma$. In total the observed power at $A$ is reduced and redshifted. The reverse is true for photons going from $A$ to $B$: from $B$'s perspective energies are blueshifted by $\gamma$ and emission rates are increased by $\gamma$.

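Now suppose $B$'s local temperature is fixed at $T_B$. The power emitted locally from his frame (per unit area) is:

$$P_B = \sigma T_B^4$$

If $A$ and $B$ are at the same temperature $T$, the ratio of the energy flux $B \to A$ over the flux $A \to B$ would be (with $\gamma$ the time-dilation factor at $B$):

$$\frac{\Phi_{B \to A}}{\Phi_{A \to B}} = \frac{\sigma T^4 / \gamma^2}{\sigma T^4\, \gamma^2} = \frac{1}{\gamma^4}$$

This means they're not in thermal equilibrium as there is a net energy flux.

To achieve equilibrium, each region must have a temperature such that the flux is equal. As radiated blackbody power follows the Stefan-Boltzmann law and $P \propto T^4$, there must be a temperature gradient:

$$T_B = \gamma\, T_A$$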

This means $B$'s absolute temperature must be higher than $A$'s: $T_B > T_A$. What we've derived here is the Ehrenfest–Tolman law, which in general can be written in terms of the space-time metric $g_{\mu\nu}$:

$$T(x)\,\sqrt{|g_{00}(x)|} = \text{const}$$

or

$$T(x) = \frac{T_\infty}{\sqrt{|g_{00}(x)|}}$$

$T_\infty$ is also called the redshifted temperature. So in simple words, $A$ and $B$ are at different temperatures to compensate for photon energy shifts and differences in emission rates due to time dilation.
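As a small worked example of the Ehrenfest–Tolman law, the sketch below evaluates the local equilibrium temperature outside a spherical mass. It assumes the Schwarzschild value $|g_{00}| = 1 - r_s/r$ with $r_s = 2GM/c^2$; the function name and the sample numbers are hypothetical.

```python
import math


def local_temperature(r, t_infinity, r_s=1.0):
    """Tolman temperature outside a Schwarzschild mass.

    Assumes |g_00| = 1 - r_s/r (with r > r_s), so T(r) * sqrt(1 - r_s/r)
    equals the temperature `t_infinity` seen far away.
    """
    if r <= r_s:
        raise ValueError("r must lie outside the Schwarzschild radius")
    return t_infinity / math.sqrt(1.0 - r_s / r)


if __name__ == "__main__":
    # At r = (4/3) r_s the redshift factor is sqrt(1/4) = 1/2.
    print(local_temperature(4.0, 300.0, r_s=3.0))
```

At $r = \tfrac{4}{3} r_s$ the local temperature is twice the temperature measured far away, and it approaches the far-away value as $r \to \infty$.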

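In the Fokker–Planck equation from earlier we've ignored this effect. What we wrote was:

$$\frac{\partial p}{\partial t} = \frac{\partial^2}{\partial x^2}\left[D(x)\, p(x,t)\right]$$

and

$$D(x) = \frac{D_0}{\gamma(x)}$$

with $\gamma(x)$ the local time-dilation factor. However, our formulation of including time dilation in the diffusion constant turns out to be equivalent, which we can see by relating the diffusion constant to the local temperature using the Stokes-Einstein relation:

$$D = \frac{k_B T}{6 \pi \eta a}$$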

Why would Entropy be Affected by Time Dilation?

I think the fact that regions of stretched space have higher entropy (more states) is fairly intuitive, since we're used to thinking of entropy as an extensive property proportional to volume. But why would entropy care about time? I think there are a couple of interesting perspectives on this.

One definition that is more general than the usual Gibbs entropy is Gibbs-Shannon entropy, which counts states through an integral over phase space density $\rho(x, p)$ with respect to position and momentum coordinates (with or without the quantum normalization factor/state volume $h$):

$$S = -k_B \int \rho(x, p)\, \ln \rho(x, p)\, \frac{dx\, dp}{h}$$

Now for the interesting part: time dilation in curved space-time stretches proper time. A slower rate of time corresponds to a stretching in the momentum space direction. Since phase space volume is measured as $dx\, dp$, and proper time affects $p$, a slower $\tau$ expands the volume element in momentum.

In other words: Time dilation increases the density of available microstates in momentum space.

More microstates = more entropy = more probability weight accumulates there. From a flat-space viewpoint, this looks like a gravitational potential, but thermodynamically it’s just where entropy is highest.

The geometry of the phase space is related to the space-time metric, and the invariant phase space volume element in curved space-time can be calculated as:

Here, the term incorporates the spatial geometry, and the delta function enforces the mass-shell constraint. This formulation makes it clear that the space-time metric directly shapes the density of states.

(Note to self: Make sure I understand this in more detail. Volume element over cotangent bundle of spacetime. Some sources are: https://physics.stackexchange.com/questions/83260/lorentz-invariant-integration-measure https://arxiv.org/abs/2106.09235 https://www.icranet.org/veresh/RKT.pdf https://physics.stackexchange.com/questions/167813/proving-the-lorentz-invariance-of-the-lorentz-invariant-phase-space-element )

So the particle isn’t pulled down by gravity—it’s drawn into regions with more available phase space, i.e., where the density of states is higher. That’s what an entropic force is.

This is why I think of gravity not as a classical force from curved geodesics, but as a statistical bias toward regions of higher microscopic degeneracy. And it's why the Fokker–Planck diffusion equation with a time-dilation-modulated diffusion constant $D(x)$ naturally leads to the Tolman–Ehrenfest equilibrium. All the entropy accounting is built into the coordinates, and the free energy remains the same.